Last updated: 7 September 2022: David Green

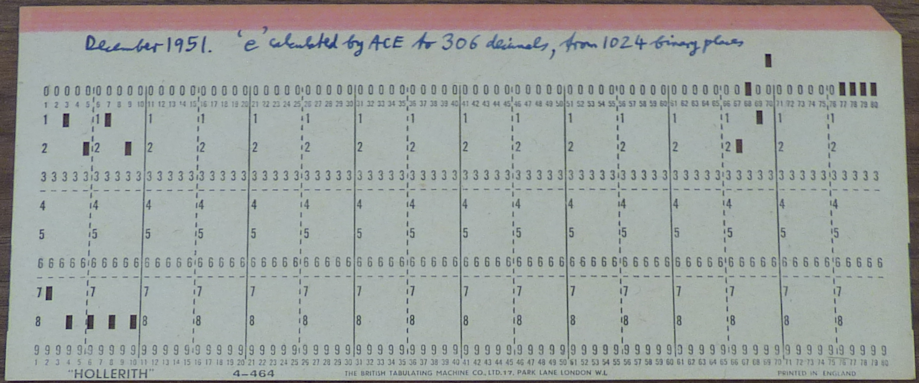

Amongst the Woodger papers in the Wroughton archives is a box of punched cards including some cards with e punched to 306 decimal places. They are dated December 1951.

The program is also mentioned in Woodger's 1958 article. There he records that "By the end of 1951 nine long delay lines were in service. The superiority of digital over analogue machines as regards accuracy was demonstrated by a program to calculate e to 306 decimal figures, treating the contents of one delay line as a single number of 1024 binary digits. While this particular tour de force was purely for demonstration the principle is important, and arithmetic using as many as four 32-digit words for each number has since been employed where this was the simplest way of overcoming extensive loss of significant figures through cancellation; one word would represent b and three words would represent a to 94 binary places, for the floating number a.2b"

That is all the information available. No code or flow charts or program notes have survived for the calculation of e or the arithmetic of four 32-digit words.

The procedure used to calculate e was probably to calculate the sum

It is convenient to start with the "2." understood and work out the fractional part in 1023 bits, starting with the sum equaling 1/2 (DL10 words 0-30 = 0, word 31 = P31 and assuming the decimal point is between P31 and P32) and noting that s(k+1) = s(k)/k.

True multiple precision arithmetic is not required to do this and I doubt it was used in the original. 1/176! is too small to register in 1024 bits, and so 176 is the largest divisor required. And only multiplication and division by 10 are needed for punching. So addition of two 32-word numbers is required, but only division of a 32-word number by a single word, and simple multiplication of a 32-word number by 10.

I have written code to replicate the calculation [code, flow chart]. The new program fills the eleven delay lines provided in the emulator. However, the program comprises two distinct parts: calculate e in binary, then convert the binary to decimal and punch the cards. It would be simple to separate the two parts to limit the use of delay lines to just the nine available in 1951.

To run the program on the emulator is straightforward. Load the program cards and press Initial Input. When the computer stops on {0 15-28} "load" some punch cards and single shot.

This computes the following value for e in delay line 10:

delay line(10) ( 0) 11101 00010 00101 01010 01110 11010 00 ( 1) 11000 10011 00101 11000 01111 00011 00 ( 2) 10101 10110 01011 10110 11110 01111 11 ( 3) 00101 01010 00000 10010 00101 11001 10 ( 4) 10110 00110 01110 10110 10111 00011 11 ( 5) 01001 00110 11000 01010 11110 10100 10 ( 6) 10111 10110 01001 11101 11101 10101 10 ( 7) 10110 01001 00011 11000 10000 01010 10 ( 8) 11001 11111 01110 00001 11101 01000 10 ( 9) 11101 10001 01011 00000 10000 00101 10 (10) 00000 11101 01110 01110 01010 01000 11 (11) 10011 10001 11000 11010 01000 11001 11 (12) 00010 11110 01100 10000 11101 10000 00 (13) 01101 00101 11000 11111 10011 10101 01 (14) 10111 11010 10000 11100 10100 00000 11 (15) 00000 10111 01111 10011 01110 10001 01 (16) 01110 10011 00010 00101 00000 11010 00 (17) 10001 10011 10101 10001 01000 10100 00 (18) 00000 10011 00010 01010 11010 10010 00 (19) 11001 01111 00011 01110 10101 01100 01 (20) 11010 00011 11110 00001 00000 01100 11 (21) 01111 11001 10000 10100 10110 00000 01 (22) 11000 10000 10010 01000 10111 11000 11 (23) 10011 10111 01101 01001 10011 10100 10 (24) 11100 11110 01100 11010 00101 00110 00 (25) 10011 10101 00010 11100 01101 11011 10 (26) 11111 10000 01110 01111 00111 11101 00 (27) 01011 11110 00011 10101 11001 00011 01 (28) 11000 11110 01111 00101 11100 11100 10 (29) 00000 01000 11010 10001 11011 11110 10 (30) 10101 10010 10100 10110 11101 01000 10 (31) 10001 10100 01010 10000 11111 10110 10

Here the least significant bit is P1 in DL100 and the most significant bit is P31 in 1031 (representing ½, with the decimal point assumed between P31 and P32).

"http://numberworld.org/constants/E 132877206 Hexadecimal Digits.zip" contains rather more binary digits (132877206) than are needed to check the result. Comparing their data with 100 shows

numberworld: 11000 01101 10111 11001 00100 11001 10

this result: 00010 11101 10111 11001 00100 11001 10

^^^^^ ^

a rounding error of 6 bits (ie. 101001: decimal 37)

The result, correctly rounded and punched on ten cards, is

2.7182818284590452353602874713526 62497757247093699959574966967627 72407663035354759457138217852516 64274274663919320030599218174135 96629043572900334295260595630738 13232862794349076323382988075319 52510190115738341879307021540891 49934884167509244761460668082264 80016847741185374234544243710753 9077744992069551703

https://www.math.utah.edu/~pa/math/e.html shows the last twenty

digits as

90777449920695517027

The NPL cards show just the fractional part of 'e', punched 9 digits per card on 34 cards. Presumably they used a standard punch routine. Card 1 has .718281828

and card 34 has 069551702, presumably truncated rather than rounded.

The program would not have been run often and there would have been no reason (other than pride?) to strive for perfection. But we don't have the code so we cannot know how carefully it was coded. I have tried to optimise a couple of aspects that greatly reduce the machine time but not bothered to finesse the code further:

---

©2020 David Richard Green. All rights reserved.

dgreen@uraone.com